Nvidia’s Breakthroughs at CES 2026: Vera Rubin, AI Platforms & Future‑Ready Ecosystems

Nvidia’s presence at CES 2026 was one of the show’s defining stories. The company unveiled a set of advances that together reveal its strategy for leading the next wave of AI computing. At the heart of that strategy is the Vera Rubin platform, which moves AI infrastructure away from isolated chips and toward tightly integrated, rack‑scale AI supercomputing systems.

Named after the American astronomer Vera Rubin, whose work transformed our understanding of dark matter, the platform is what Nvidia presents as its biggest leap in AI hardware design. Rather than releasing AI chips in isolation, Nvidia has built a cohesive system that integrates multiple components into a unified architecture optimized for both training and inference. These components include Rubin GPUs, Vera CPUs, high‑speed NVLink interconnects, SuperNICs, and AI‑native storage.

Nvidia credits this “extreme co‑design” philosophy, in which every component is engineered to work with the others, for the Rubin platform’s gains in performance and efficiency. According to Nvidia, the new system delivers AI tokens at a fraction of the cost of previous generations and with substantially higher throughput. That addresses one of the biggest bottlenecks in scaling AI applications.

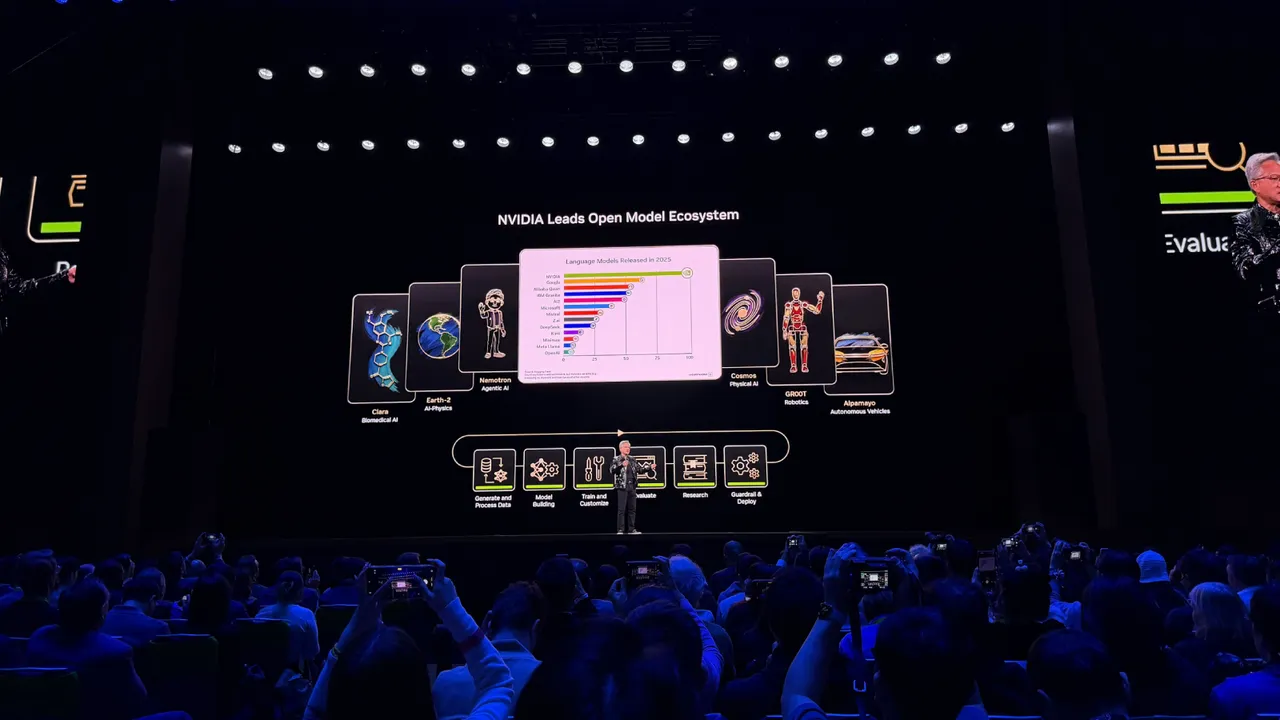

Another cornerstone of Nvidia’s CES announcements was a renewed emphasis on open models and software ecosystems. Nvidia introduced a suite of open AI models, trained on its own supercomputers, that span domains such as healthcare, climate science, robotics, and autonomous driving. By releasing these models publicly, Nvidia is betting that collaborative development will speed the adoption of frontier AI across industries.

One particularly ambitious part of the company’s vision is its push into “physical AI”, in which AI systems control robots, vehicles, and edge machines rather than handling only digital tasks. Simulation‑driven training, using tools such as Nvidia’s Cosmos world model, lets machines learn in virtual environments before they operate in the real world. This is a crucial step for scaling embodied intelligence.

On the consumer and creative side, Nvidia also showcased upgrades to its gaming and creative technologies. For example, DLSS 4.5 brings improved transformer‑based AI upscaling to games, raising visual fidelity and performance across hundreds of titles. New displays such as G‑SYNC Pulsar panels demonstrated how gains in real‑time responsiveness, paired with AI features, can push gaming experiences further.

Perhaps most importantly, Nvidia’s announcements underline a broader thesis: AI is no longer just software. It is infrastructure, hardware, and physical systems all at once. Nvidia is building comprehensive platforms that span chips, networking, storage, software, and open models. In doing so, it is positioning itself as both a technological backbone and an innovation partner for organizations tackling the most demanding AI applications of the next decade.